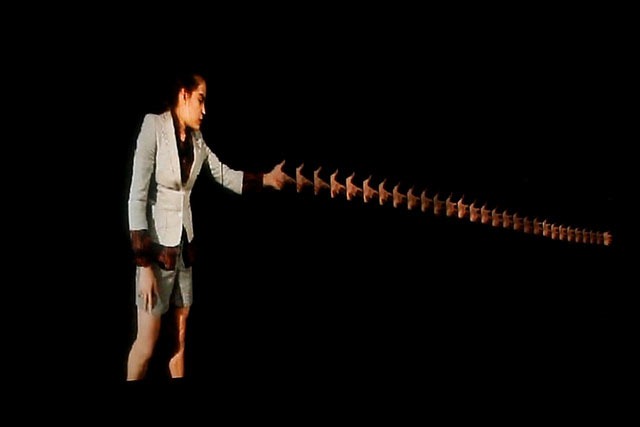

Replica, a fascinating performance art project by Jeff Howard and Alex Vessels for the Big Screens class at ITP, was exhibited on the 120-foot-long video wall at the IAC Building in NYC.

Replica is a performance that uses live image processing to explore how the awareness of time affects the perception of self-image. Patterns unfold, creating a dialogue between the performer and her representation and calling the contrast between control and authenticity into question.

The project was developed in Processing using the Most Pixels Ever library. Video was pulled from a Canon 5D Mark II camera using Canon’s EOS Utility, with CamTwist passing the feed to Processing. To broadcast the images to each of the three machines powering the video wall, we sent and received them over UDP, an approach Daniel Shiffman helped us work out. We used a MIDI controller to switch between the sketch’s modes and adjust its parameters. The code for the project will be available on GitHub soon.

The project was performed by (the very lissome) Claire Westby, who danced to the song “Lost in the World” by Kanye West.

Watch Replica on Vimeo.

Image: CC-licensed Flickr photo shared by Jeff Howard